I am a computer engineer from Philadelphia. I have worked on engineering teams small, large, and huge.

Since 2024, I have been at the Center for Functional Fabrics as an E-textile Electrical Engineer, where I do embedded development and system design towards creating the next generation of textile devices.

Let’s get in touch! levsau.engineer@gmail.com

PRIOR WORK EXPERIENCE

iPhone Hardware Team → Apple

Ball Bonder Process Engineering → Kulicke & Soffa

Health Informatics → Children's Hospital of Philadelphia

Functional Fabrics Research → Center for Functional Fabrics

Read more on my LinkedIn or Resume :^)

Publications

“Minimalist Neural Networks for Gesture Recognition on Wearable Capacitive Touch Textiles With Comparative User Study” – 2025 | ACM SUI ’25 | pdf

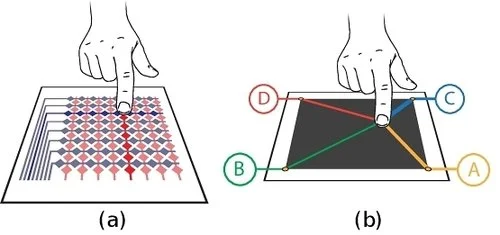

Smart textiles embedded with capacitive touch sensors offer significant potential for intuitive gesture-based interaction, yet recognizing complex gestures on resource-constrained wearable devices remains challenging. This paper presents a minimalist neural network architecture specifically optimized for knitted capacitive touch interfaces. Our approach efficiently recognizes single and multi-touch gestures including taps, swipes, and pinches with accuracy exceeding 90% on training data and 80% on testing data. To assess real-world usability, we conducted a comparative user study evaluating participant performance with our knitted interface against a conventional trackpad in a gesture-controlled gaming scenario. Results demonstrated comparable overall performance between both interfaces, with participants achieving similar game scores despite the novelty of the textile interface. Statistical analysis revealed rapid user adaptation to the textile interface, with performance stabilizing after initial trials while the standard trackpad showed continuous improvement throughout testing. Quantitative metrics were supplemented by qualitative feedback highlighting the comfort and tactile appeal of the textile interface. This work advances the practical deployment of smart textile gesture recognition systems by addressing both technical performance requirements and usability considerations for real-world applications.

“Recognizing Complex Gestures on Minimalistic Knitted Sensors: Toward Real-World Interactive Systems” – 2023 | arXiv page | pdf

Developments in touch-sensitive textiles have enabled many novel interactive techniques and applications. Our digitally-knitted capacitive active sensors can be manufactured at scale with little human intervention. Their sensitive areas are created from a single conductive yarn, and they require only a few connections to external hardware. This technique increases their robustness and usability, while shifting the complexity of enabling interactivity from the hardware to computational models. This work advances the capabilities of such sensors by creating the foundation for an interactive gesture recognition system. It uses a novel sensor design and a neural network-based recognition model to classify 12 relatively complex, single touch point gesture classes with 89.8% accuracy, unfolding many possibilities for future applications. We also demonstrate the system's applicability and robustness to real-world conditions through its performance while being worn and the impact of washing and drying on the sensor's resistance.

MEOWS

“The Motion Enhanced Off-limits Water Spray”

Award-Winning Senior Design Project

Born from the frustration of trying to keep my two lovely cats off my kitchen counter, MEOWS is a modern smart home appliance that uses machine learning to detect when your furry friend is on your counter before aiming at them and spraying them with a small stream of water.

My team and I are absolutely honored to have been awarded second place in the 2024 Drexel Engineering Senior Design Championships.

To read the code and learn more, check out the GitHub repo!

Developed for my engineering senior design project, this prototype of MEOWS is built from a 3D-printed chassis and an electric water gun. It aims using stepper motors and is powered by a Raspberry Pi 4 Model B. It sees with a wide-angle camera and an infrared motion sensor.
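For the curious, here is a rough sketch of how a camera detection could be turned into a stepper move. This is not the code from the MEOWS repo; the function name `pixel_to_steps`, the 90° field of view, and the 200 steps-per-revolution motor are all assumptions picked for illustration, and the simple pinhole model used here would need a lens correction for a real wide-angle camera.

```python
import math


def pixel_to_steps(px, frame_size, fov_deg, steps_per_rev=200, microsteps=1):
    """Convert a pixel coordinate on one axis into a signed step offset.

    Hypothetical helper: every parameter value here is an assumption,
    not a measurement from the MEOWS prototype.
    """
    half = frame_size / 2
    # Offset from the image center, normalized to [-1, 1].
    norm = (px - half) / half
    # Pinhole camera model: the edge of the frame sits at fov/2 degrees.
    half_fov = math.radians(fov_deg / 2)
    angle_deg = math.degrees(math.atan(norm * math.tan(half_fov)))
    # Stepper resolution in steps per degree of rotation.
    steps_per_degree = steps_per_rev * microsteps / 360
    return round(angle_deg * steps_per_degree)


# Example: a cat detected at the right edge of a 640-pixel-wide frame.
pan_steps = pixel_to_steps(640, 640, fov_deg=90)
```

A target in the center of the frame needs zero steps, and the sign of the result gives the direction to turn. The same function would work for the tilt axis by passing the vertical pixel coordinate and frame height.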

![Me [left] with my partners Abhi [center] and Ben [right]](https://images.squarespace-cdn.com/content/v1/667ae2a45711212c8a85ecee/4ee5124c-b9d9-4ade-9a20-9fe05459dcaa/tempImageFhvJiH.jpg)

Me (left) with my friends/teammates Abhishek Dave (center) and Ben Esposito (right). Not shown in this photo are our teammates Jack Pinkstone and Noah Robinson.

See MEOWS in action!

With Ducky:

Technical demonstration:

Meeting The Woz